Linode Systems Engineer Jon “jfred” Frederickson shares his experience building out the IP whitelisting feature for running Kubernetes on Linode.

As expected, Linode’s engineering team hit plenty of obstacles while building out our Kubernetes features. One challenge we hadn’t anticipated, however, was how to whitelist applications running in Kubernetes on the infrastructure we already have.

Here at Linode, we run several internal applications. We use firewall rules to make these apps accessible only to the networks and systems that need them.

If you have an application with its own database, that database probably only needs to be reachable by instances of that application. If both are running in a Kubernetes cluster, that’s simple enough: NetworkPolicies let you lock that down. If one service is running outside the cluster, however, things get more complicated.

We already had automation around IP whitelisting, but Kubernetes clusters can be more dynamic than anything else in our infrastructure so far, so we needed to keep our whitelists up to date as nodes were added to and removed from clusters. We figured this must be a fairly common issue for anyone introducing Kubernetes to existing infrastructure, but we didn’t find any public solutions to the problem.

The Problem

The question I faced was this: if I wanted to whitelist all the Nodes in a specific cluster on a server outside that cluster, what would my ideal configuration look like?

Without Kubernetes-aware tooling, I would have to whitelist each cluster node’s IP address by hand, one by one. Not only is this time-consuming, but it also leaves more room for human error, and the list has to be revised every time a node joins or leaves the cluster.

The Solution

Over the next couple of weeks, I built a solution that (mostly) makes whitelisting a Kubernetes cluster as simple as manually whitelisting a single IP.

We use Salt to manage our infrastructure, and we already had a Salt formula to manage firewall rules. For systems enrolled in Salt, we already had mechanisms to find all systems of a given class when building whitelists, so we were looking for something similar for our Kubernetes clusters.

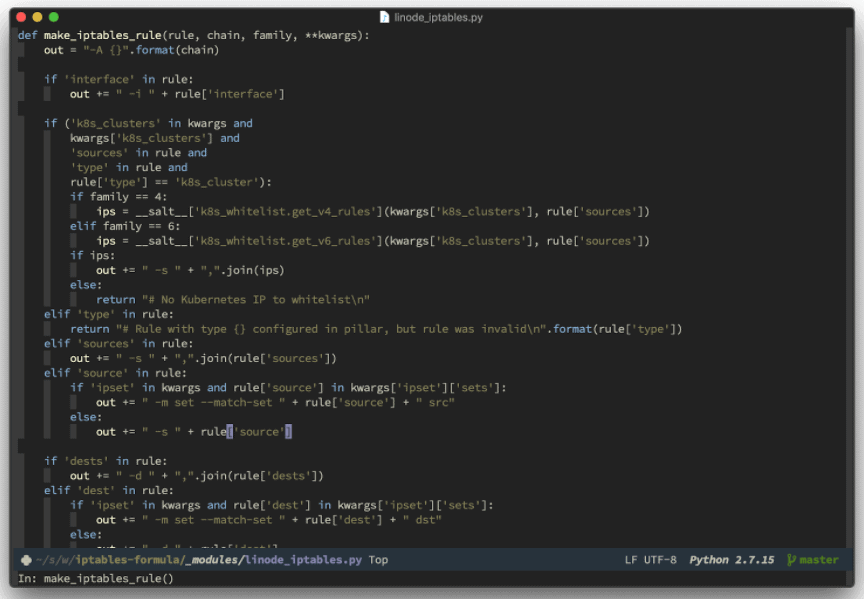

Salt is extremely flexible and makes writing custom modules easy. For example, our iptables formula uses one to generate iptables rules from Salt’s per-node configuration (“pillar” data). We do this by building one rule at a time from a list of rules stored in pillar. It’s a lot of string concatenation, which admittedly does look a bit ugly. Luckily, the iptables rule format is stable enough that it ends up working pretty well. Here’s a snippet of what that looks like:

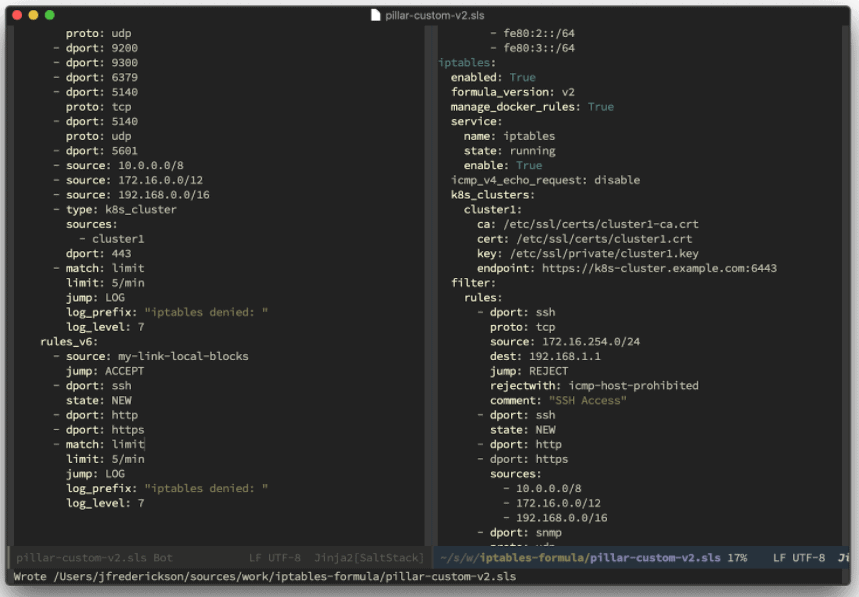
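The approach described above, building one rule string at a time from a list stored in pillar, can be sketched in Python (the language Salt custom modules are written in). This is a minimal illustration, not the formula shown in the image: the rule keys (`chain`, `proto`, `source`, `dport`, `jump`) and the function names are hypothetical, and the output uses `iptables-save`-style `-A` lines.

```python
# Minimal sketch: turn pillar-style rule dicts into iptables rule strings.
# The rule schema and function names are hypothetical, not Linode's formula.
from __future__ import annotations

from typing import Iterable


def build_rule(rule: dict) -> str:
    """Concatenate one pillar rule into an iptables-save style '-A' line."""
    parts = ["-A", rule.get("chain", "INPUT")]
    if "proto" in rule:
        parts += ["-p", rule["proto"]]
    if "source" in rule:
        parts += ["-s", rule["source"]]
    if "dport" in rule:
        # --dport is only valid together with -p tcp or -p udp
        if rule.get("proto") not in ("tcp", "udp"):
            raise ValueError("dport requires proto tcp or udp")
        parts += ["--dport", str(rule["dport"])]
    parts += ["-j", rule.get("jump", "ACCEPT")]
    return " ".join(parts)


def build_rules(rules: Iterable[dict]) -> list[str]:
    """Build one rule at a time, preserving the order given in pillar."""
    return [build_rule(r) for r in rules]


if __name__ == "__main__":
    # Hypothetical pillar data, e.g. what a formula might read from pillar
    pillar_rules = [
        {"proto": "tcp", "source": "192.0.2.10/32", "dport": 5432},
        {"proto": "tcp", "source": "198.51.100.0/24", "dport": 22},
    ]
    for line in build_rules(pillar_rules):
        print(line)
```

Keeping the field order fixed in `build_rule` is what makes plain string concatenation workable here; it relies on the same stability of the iptables rule format mentioned above.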

Thanks to Kubernetes’ flexible API, this integration wasn’t conceptually difficult. Essentially, we added a new rule type for K8s clusters: when the formula encounters a rule of that type, it reaches out to that cluster to fetch the IPs of its nodes, caches them locally, and inserts an iptables rule for each IP. The local cache ensures that intermittent network failures don’t cause problems. Here’s what that looks like to use:
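The fetch, cache, and expand flow described above might look roughly like the following Python sketch. It is not Linode’s code: the rule keys (`cluster`, `proto`, `dport`), the injected `fetch_nodes` callable, and the per-cluster JSON cache file are all hypothetical. The only real structure it relies on is the Kubernetes NodeList returned by `GET /api/v1/nodes`, where each Node lists its addresses under `status.addresses` with a `type` such as `InternalIP` or `ExternalIP`. Which address type your external server actually sees depends on your network, so the `InternalIP` default is an assumption.

```python
# Minimal sketch of a hypothetical "k8s cluster" rule type for an iptables formula.
# Not Linode's code: rule keys, fetch_nodes, and the JSON cache layout are assumptions.
from __future__ import annotations

import json
import os
import tempfile
from typing import Callable


def node_ips(node_list: dict, address_type: str = "InternalIP") -> list[str]:
    """Collect addresses of one type from a Kubernetes NodeList (GET /api/v1/nodes)."""
    ips = set()
    for node in node_list.get("items", []):
        for addr in node.get("status", {}).get("addresses", []):
            if addr.get("type") == address_type:
                ips.add(addr["address"])
    return sorted(ips)


def cluster_ips(cluster: str, fetch_nodes: Callable[[str], dict], cache_dir: str) -> list[str]:
    """Fetch a cluster's node IPs, falling back to the local cache if the fetch fails."""
    cache_path = os.path.join(cache_dir, f"{cluster}.json")
    try:
        ips = node_ips(fetch_nodes(cluster))
    except (OSError, ValueError):
        # Network errors (OSError, incl. ConnectionError/URLError) or a bad JSON body
        # (ValueError): reuse the last known-good list if one was cached.
        if not os.path.exists(cache_path):
            raise
        with open(cache_path) as fh:
            return json.load(fh)
    os.makedirs(cache_dir, exist_ok=True)
    with open(cache_path, "w") as fh:
        json.dump(ips, fh)  # refresh the cache after every successful fetch
    return ips


def expand_k8s_rule(rule: dict, fetch_nodes: Callable[[str], dict], cache_dir: str) -> list[str]:
    """Expand one cluster rule into one iptables '-A' line per node IP (/32 assumes IPv4)."""
    chain = rule.get("chain", "INPUT")
    return [
        f"-A {chain} -p {rule['proto']} -s {ip}/32 --dport {rule['dport']} -j ACCEPT"
        for ip in cluster_ips(rule["cluster"], fetch_nodes, cache_dir)
    ]


if __name__ == "__main__":
    # Stand-in for the cluster API: a NodeList with one node
    sample = {"items": [{"status": {"addresses": [
        {"type": "InternalIP", "address": "192.168.128.5"},
        {"type": "Hostname", "address": "node-1"},
    ]}}]}
    rule = {"cluster": "prod", "proto": "tcp", "dport": 5432}
    with tempfile.TemporaryDirectory() as cache_dir:
        for line in expand_k8s_rule(rule, lambda name: sample, cache_dir):
            print(line)
```

Injecting `fetch_nodes` keeps the sketch testable without a live cluster; in practice it could wrap an authenticated HTTPS request to the cluster’s API server. Falling back to the cache only on network and JSON-decoding failures means a transient outage reuses the last known-good node list instead of failing the firewall run.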

What’s next?

Ultimately, I would like to open source the whole formula so that the Linode community can help develop the tool even further.

The next step, though, is to make these rules update automatically as nodes join and leave a cluster. The goal is for persistently whitelisting a cluster to be as simple as whitelisting a single IP, but we’re not quite there yet.

As it stands, the formula picks up new nodes only when the firewall configuration is applied, not automatically. This isn’t much of an issue, since we can work around it by periodically reapplying the firewall configuration.

However, I would like to make it more reactive. The mechanism I envision would listen for the creation of new Node objects in Kubernetes and trigger an update (probably through the Salt event bus). Ideally, this wouldn’t require any manual steps aside from configuring a Kubernetes firewall rule. Maybe once we open source the formula, you can help us figure that out!
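One way such a reactive mechanism could work is sketched below in Python. The names are hypothetical and the Kubernetes and Salt wiring is deliberately left out so the logic runs on its own: it consumes events shaped like Kubernetes watch events (types `ADDED`, `MODIFIED`, `DELETED`), keeps a map of node names to IPs, and calls `on_change` only when the cluster’s overall IP set changes. Handling `DELETED` as well as `ADDED` covers nodes leaving the cluster, not just joining it.

```python
# Minimal sketch of the envisioned reactive update (hypothetical; not part of the formula).
# Events are assumed to be dicts shaped like Kubernetes watch events:
#   {"type": "ADDED" | "MODIFIED" | "DELETED" | ..., "object": <Node as a dict>}
# With the official kubernetes client they could come from
#   watch.Watch().stream(client.CoreV1Api().list_node), using event["raw_object"];
# on_change could publish a Salt event for a reactor. Both are assumptions.
from __future__ import annotations

from typing import Callable, Iterable


def addresses(node: dict, address_type: str = "InternalIP") -> set[str]:
    """Return a Node's addresses of the given type."""
    return {
        a["address"]
        for a in node.get("status", {}).get("addresses", [])
        if a.get("type") == address_type
    }


def track_nodes(events: Iterable[dict], on_change: Callable[[list[str]], None]) -> dict[str, set[str]]:
    """Maintain node name -> IPs and call on_change only when the overall IP set changes."""
    nodes: dict[str, set[str]] = {}
    current: set[str] = set()
    for event in events:
        kind = event["type"]
        if kind not in ("ADDED", "MODIFIED", "DELETED"):
            continue  # e.g. BOOKMARK or ERROR events carry no Node change
        node = event["object"]
        name = node["metadata"]["name"]
        if kind == "DELETED":
            nodes.pop(name, None)
        else:
            nodes[name] = addresses(node)
        ips = set().union(*nodes.values())
        if ips != current:  # MODIFIED fires often (status updates); skip no-op changes
            current = ips
            on_change(sorted(ips))
    return nodes


if __name__ == "__main__":
    def node(name: str, ip: str) -> dict:
        return {"metadata": {"name": name},
                "status": {"addresses": [{"type": "InternalIP", "address": ip}]}}

    events = [
        {"type": "ADDED", "object": node("a", "10.0.0.1")},
        {"type": "MODIFIED", "object": node("a", "10.0.0.1")},  # no IP change: no callback
        {"type": "ADDED", "object": node("b", "10.0.0.2")},
        {"type": "DELETED", "object": node("a", "10.0.0.1")},
    ]
    track_nodes(events, print)
```

Running the example prints the IP list three times: after `a` joins, after `b` joins, and after `a` leaves; the `MODIFIED` event triggers nothing. Comparing whole IP sets rather than reacting to every event matters because Nodes emit frequent `MODIFIED` events for routine status updates, and each callback could mean a firewall state run.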

For now, I’m really happy with what we created and believe it will be an asset to Linode users experimenting with Kubernetes.

Thanks for reading about Linode’s systems engineering process. Please let me know in the comments section if you have any questions about what we did.

Interested in Kubernetes on Linode?

Our team is proud to introduce the beta version of Linode Kubernetes Engine (LKE) – a fully managed container orchestration engine for deploying and managing containerized applications and workloads.

We need your feedback, though. Please sign up for the LKE beta here to give it a try and earn free Linode swag.
