Containerization and Kubernetes are now essential elements for creating scalable cloud-native applications. But not every application workload needs containers or requires the resources of Kubernetes. Nomad by HashiCorp is a lightweight workload scheduler that provides some of the benefits of Kubernetes but works with more than just containers. We collaborated with teams at HashiCorp to provide new, quick, and streamlined high-availability Nomad cluster deployments on Akamai cloud computing services.

Nomad can serve as either an alternative or an addition to Kubernetes. In either role, Nomad supports more than containers: it uses task drivers to run different types of workloads, and it schedules that work from jobs, which are declarative configuration files listing the tasks Nomad should run or the changes it should make.
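To make the idea of a job more concrete, here is a minimal Python sketch that builds a job in the JSON shape accepted by Nomad's HTTP API (most teams write the same structure in HCL job files). The field names are modeled on Nomad's job format; the job name `example-web`, the `nginx:1.25` image, the resource figures, and the single-group layout are hypothetical choices for illustration.

```python
import json


def make_job(job_id, tasks, datacenters=("dc1",), count=1):
    """Wrap a list of task dicts in a single-group Nomad service job.

    Field names follow the JSON job format used by Nomad's HTTP API;
    the single-group layout and the defaults are illustrative assumptions.
    """
    return {
        "Job": {
            "ID": job_id,
            "Name": job_id,
            "Type": "service",  # a long-running workload
            "Datacenters": list(datacenters),
            "TaskGroups": [
                {
                    "Name": f"{job_id}-group",
                    "Count": count,  # number of instances of this group
                    "Tasks": list(tasks),
                }
            ],
        }
    }


# Hypothetical task: one container run by the Docker task driver.
web = {
    "Name": "web",
    "Driver": "docker",
    "Config": {"image": "nginx:1.25"},
    "Resources": {"CPU": 200, "MemoryMB": 128},  # MHz and MB
}

job = make_job("example-web", [web])
print(json.dumps(job, indent=2))
```

The job only declares what should run; Nomad's servers decide where each task lands.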

Since not every application can quickly shift to containerization, Nomad bridges cloud-native, container-first development and support for legacy applications. This lets development teams adopt a workload scheduler without completely re-architecting their software.

Nomad joins other Marketplace cluster apps that make deploying and configuring highly available, self-replicating clusters as easy as deploying an app on a single instance. Marketplace clusters eliminate the manual tasks and additional scripting typically required to set up a high-availability environment. Clusters incur no additional charges; you are billed only for the resources you use.

How It Works

Nomad lets you deploy and manage both containerized applications and non-containerized legacy applications through a single, unified workflow. For example, your application may need Kubernetes for large-scale container orchestration, while Nomad handles standalone applications, including raw binaries, Java JAR files, and QEMU/KVM virtual machines.

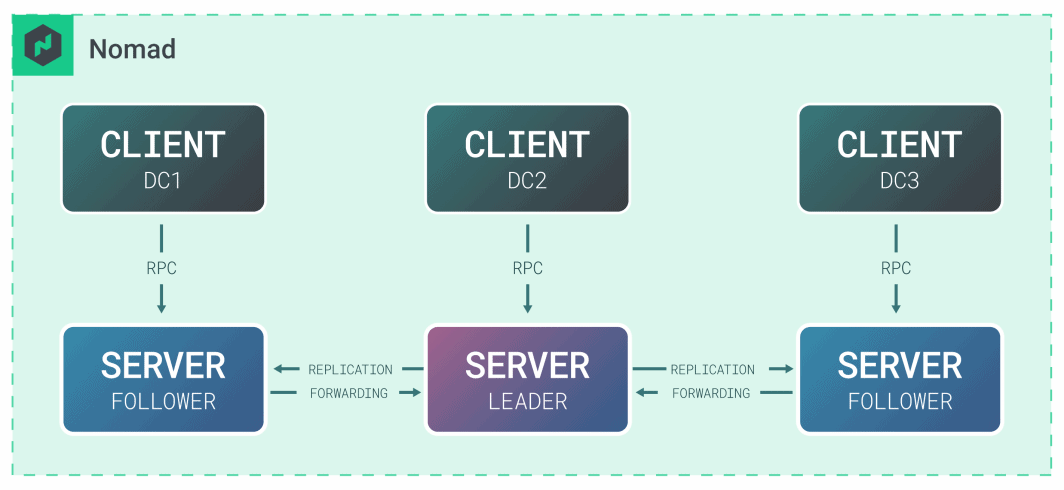
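Each of these workload types maps to a different Nomad task driver. The sketch below picks a driver per workload; the `kind` labels, task names, and artifact paths are hypothetical, while the driver names (`docker`, `exec`, `java`, `qemu`) and their config keys correspond to Nomad's built-in task drivers.

```python
def task_for(kind, name, artifact, args=()):
    """Return a Nomad task dict that runs `artifact` with a driver suited to `kind`.

    The `kind` labels are hypothetical; driver names and config keys follow
    Nomad's docker, exec, java, and qemu task drivers.
    """
    if kind == "container":
        driver, config = "docker", {"image": artifact}
    elif kind == "binary":
        # exec runs a binary with isolation; raw_exec would run it without isolation.
        driver, config = "exec", {"command": artifact, "args": list(args)}
    elif kind == "jar":
        driver, config = "java", {"jar_path": artifact}
    elif kind == "vm":
        driver, config = "qemu", {"image_path": artifact, "accelerator": "kvm"}
    else:
        raise ValueError(f"unsupported workload kind: {kind!r}")
    return {"Name": name, "Driver": driver, "Config": config}


# A single job could mix containerized and legacy workloads (all names hypothetical).
tasks = [
    task_for("container", "cache", "redis:7"),
    task_for("binary", "legacy-api", "/usr/local/bin/legacy-api", ["--port", "8080"]),
    task_for("jar", "billing", "local/billing.jar"),
    task_for("vm", "appliance", "local/appliance.img"),
]

for task in tasks:
    print(task["Name"], "->", task["Driver"])
```

The `local/` paths assume the files were first downloaded into the task's directory (for example with an `artifact` block), and each driver must be available on the client that runs the task.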

On the backend, Nomad divides responsibilities between servers and clients. Servers are the brains of the operation: they accept jobs from users and delegate tasks to clients. Clients are the machines that actually run the tasks assigned to them. Nomad uses bin packing for efficient job scheduling and resource optimization, and it uses Consul for service discovery. A Nomad cluster typically runs three to five servers.

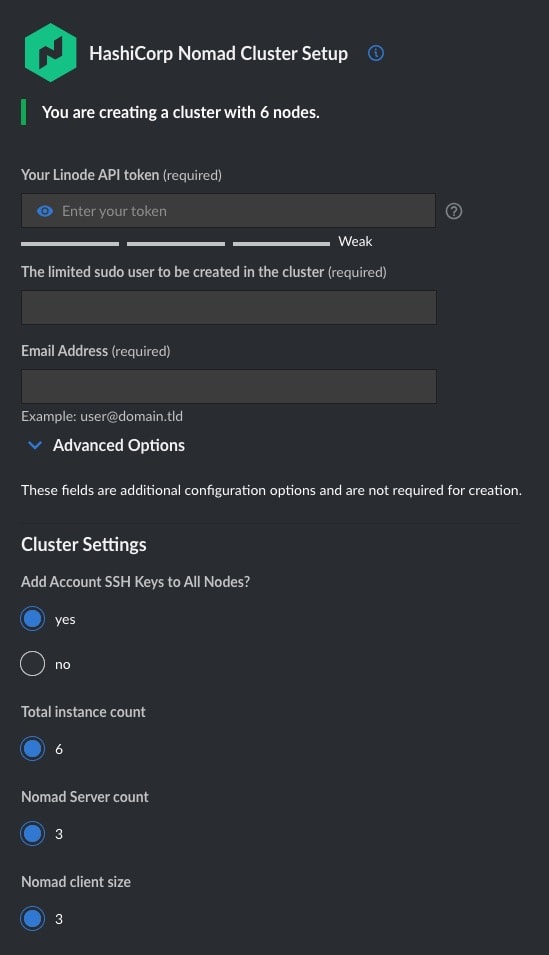
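Bin packing means filling clients as tightly as possible before spreading work onto emptier ones, which leaves larger blocks of capacity free for bigger jobs. The sketch below is a simplified best-fit heuristic, not Nomad's actual scheduler, which scores candidate nodes on additional factors such as affinities and spreading. The client names, capacities, and task sizes are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Client:
    name: str
    cpu: int  # total CPU in MHz
    mem: int  # total memory in MB
    cpu_used: int = 0
    mem_used: int = 0

    def fits(self, cpu, mem):
        return self.cpu_used + cpu <= self.cpu and self.mem_used + mem <= self.mem

    def slack_after(self, cpu, mem):
        """Fraction of CPU plus fraction of memory left free if the task lands here."""
        return ((self.cpu - self.cpu_used - cpu) / self.cpu
                + (self.mem - self.mem_used - mem) / self.mem)


def binpack(tasks, clients):
    """Place (name, cpu, mem) tasks largest-first on the fitting client with the least slack."""
    placement = {}
    for name, cpu, mem in sorted(tasks, key=lambda t: (t[1], t[2]), reverse=True):
        candidates = [c for c in clients if c.fits(cpu, mem)]
        if not candidates:
            placement[name] = None  # no capacity anywhere: the task would wait
            continue
        best = min(candidates, key=lambda c: c.slack_after(cpu, mem))
        best.cpu_used += cpu
        best.mem_used += mem
        placement[name] = best.name
    return placement


clients = [Client("client-a", 2000, 2048), Client("client-b", 4000, 4096)]
tasks = [("web", 500, 512), ("db", 1500, 1024), ("worker", 1000, 1024)]
print(binpack(tasks, clients))
# {'db': 'client-a', 'worker': 'client-b', 'web': 'client-a'}
```

Here `web` goes back onto `client-a`, filling it completely on CPU, rather than onto the emptier `client-b`.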

To deploy a cluster from our Marketplace, provide your Linode API token and a limited sudo user for the cluster, and choose whether to add your account's SSH keys to all nodes. The cluster app deploys three Nomad servers and three Nomad clients.
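Nomad servers replicate cluster state with the Raft consensus protocol, so the cluster keeps accepting and scheduling jobs only while a majority (a quorum) of servers is reachable. This short sketch shows why three servers is the usual minimum for high availability:

```python
def quorum(servers: int) -> int:
    """Smallest majority of a Raft peer set."""
    return servers // 2 + 1


def tolerated_failures(servers: int) -> int:
    """Servers that can fail while a majority remains available."""
    return servers - quorum(servers)


for n in (1, 3, 4, 5):
    print(f"{n} servers: quorum {quorum(n)}, tolerates {tolerated_failures(n)} failure(s)")
# 1 servers: quorum 1, tolerates 0 failure(s)
# 3 servers: quorum 2, tolerates 1 failure(s)
# 4 servers: quorum 3, tolerates 1 failure(s)
# 5 servers: quorum 3, tolerates 2 failure(s)
```

Three servers survive the loss of one. A fourth server raises the quorum without adding fault tolerance, which is why odd sizes such as three or five are the common choice.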

After the installation is complete, you can manage your application's jobs through the Nomad API or the Nomad web UI.
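As a starting point for scripting against the API, here is a minimal Python sketch that builds requests for Nomad's `/v1/jobs` endpoint (GET lists jobs, POST registers a job sent as `{"Job": {...}}`) using only the standard library. The address `http://127.0.0.1:4646` assumes Nomad's default HTTP port reached from a cluster node; your deployment's address, scheme, and whether an ACL token is required are assumptions to adjust.

```python
import json
import urllib.request

# Assumption: Nomad's HTTP API on its default port, reached from a cluster node.
NOMAD_ADDR = "http://127.0.0.1:4646"


def nomad_request(path, token=None, body=None, addr=NOMAD_ADDR):
    """Build (but do not send) a request to the Nomad HTTP API.

    GET when there is no body (e.g. listing jobs); POST with a JSON body
    otherwise (e.g. registering a job at /v1/jobs with {"Job": {...}}).
    """
    headers = {}
    if token:
        headers["X-Nomad-Token"] = token  # ACL token, only if ACLs are enabled
    data = None
    if body is not None:
        data = json.dumps(body).encode("utf-8")
        headers["Content-Type"] = "application/json"
    method = "GET" if data is None else "POST"
    return urllib.request.Request(addr + path, data=data, headers=headers, method=method)


def send(request):
    """Send the request and decode the JSON response."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)


list_jobs = nomad_request("/v1/jobs")
# jobs = send(list_jobs)  # requires a reachable Nomad server
```

Building the request separately from sending it keeps the sketch testable without a live cluster.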

Note: By default, Nomad connects to other cluster members using the first IP address it detects, so Nomad cluster deployments are limited to one per region. You can still scale horizontally without limit by using the Nomad Clients Cluster app to add 3, 5, or 7 additional compute instance clients, which automatically join your existing cluster using the consul_nomad_autojoin_token generated by your cluster.

For workloads that require multi-region replication or customized configuration, contact our cloud solutions engineers.

For smaller non-production workloads, Nomad is also available as a single instance deployment.

HashiCorp & Akamai

Since adding Nomad and Vault single-instance deployment apps to our Marketplace last year, we've been collaborating with HashiCorp to make IaC-first and cloud-native deployments easy to manage on Akamai. For more HashiCorp tools on Akamai, check out our Terraform Provider and Terraform guides.

See You at HashiConf!

Attending HashiConf in San Francisco this month? Stop by the Akamai gaming lounge to speak with our team, get swag, and learn more about the Nomad Cluster.
