VLANs and VPCs are methods of network isolation that we use to protect our infrastructure in public clouds. They increase security by significantly reducing our network’s attack surface while letting us segment application layers with and without public internet access. Today, let’s think bigger and span our private network across multiple regions with Linode.

When we talk about “regions,” we’re referring to distinct geographic areas within the same cloud provider. “Zones” are typically additional hosting locations within those geographic regions. For example, you might see a Northeast region based near New York and a Southeast region in Atlanta, each containing multiple zones.

In addition to delivering lower latency by being physically closer to more users, running a multi-region application gives us a significant increase in reliability and fault tolerance. Anything that can impact your workloads in one location, including hardware failure or local network outages, can potentially be mitigated by having another location to reroute your users to.

Deploying Multi-Region VLANs

To route across VLAN segments deployed in multiple regions, we can tie the segments together using a Virtual Private Network (VPN).

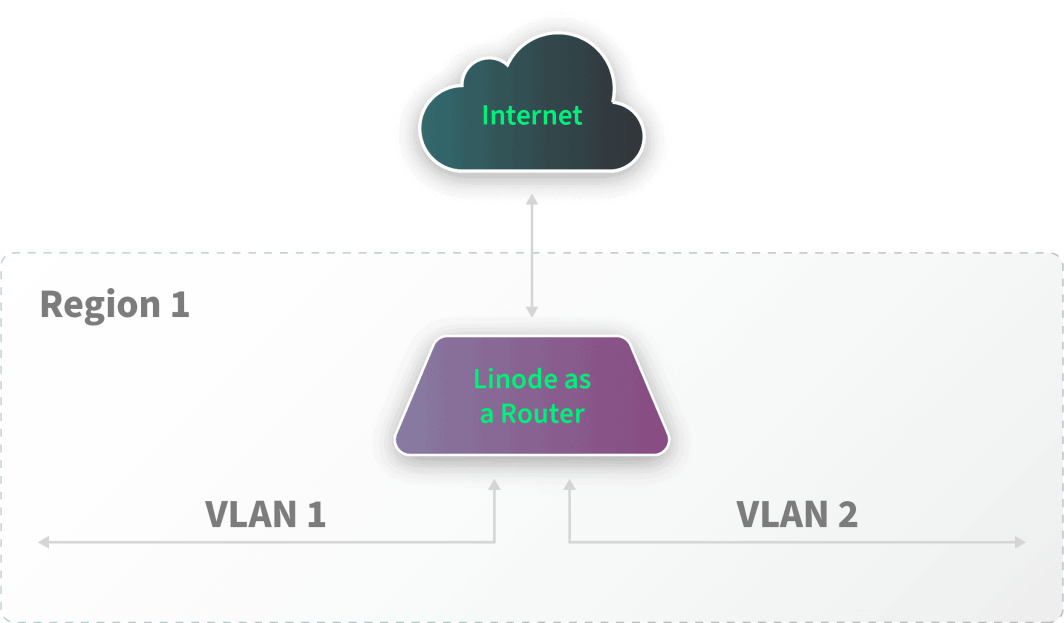

First, we tie together any associated VLANs deployed in a single region using a Linode acting as a common router. Each VLAN segment is its own isolated layer-2 domain and operates within its layer-3 subnet. All traffic between the various VLAN segments will flow through the router, and we can place firewall rules within the router to govern what traffic is allowed to traverse between the multiple segments.
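As a rough sketch of what that router configuration could look like, the function below enables IP forwarding and allows one VLAN to open connections to the other but not the reverse. The interface names (`eth1`, `eth2`) and the one-way policy are assumptions made for illustration, not part of the original setup. The script only prints the commands unless `APPLY=1` is set, since applying them requires root.

```shell
#!/bin/sh
# Dry-run helper: print each command unless APPLY=1 is set (applying needs root).
run() {
    if [ "${APPLY:-0}" = "1" ]; then
        "$@"
    else
        printf '%s\n' "$*"
    fi
}

# setup_vlan_router VLAN_A_IF VLAN_B_IF
# Hypothetical layout: VLAN_A_IF and VLAN_B_IF are the router's two VLAN interfaces.
setup_vlan_router() {
    vlan_a_if=$1
    vlan_b_if=$2
    # Let the kernel route packets between its interfaces.
    run sysctl -w net.ipv4.ip_forward=1
    # Drop forwarded traffic unless a rule below allows it.
    run iptables -P FORWARD DROP
    # Allow replies for connections that were already permitted.
    run iptables -A FORWARD -m conntrack --ctstate ESTABLISHED,RELATED -j ACCEPT
    # Allow VLAN A to open connections to VLAN B; the reverse stays blocked.
    run iptables -A FORWARD -i "$vlan_a_if" -o "$vlan_b_if" -j ACCEPT
}
```

Calling `setup_vlan_router eth1 eth2` prints the four commands for review. Because the default policy is DROP, any other flow the router should carry (for example, traffic headed into a tunnel or out to the internet) needs its own explicit FORWARD rule.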

We can then configure this router instance to carry traffic to network segments in other regions over the public internet, using VPN software like WireGuard or a protocol suite like IPsec.

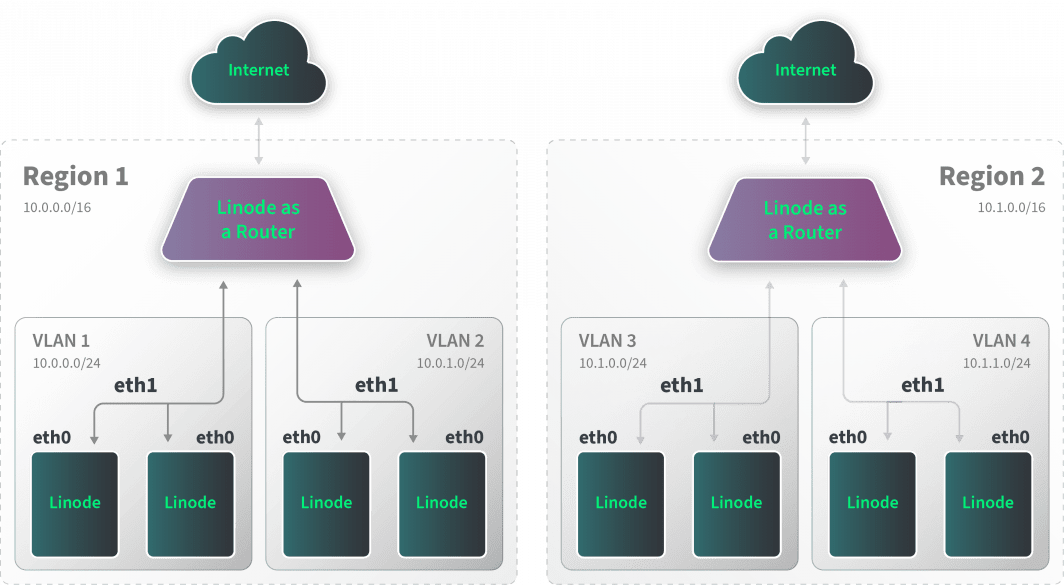

The example above shows a two-region deployment. In each region, a Linode router instance configured with multiple interfaces manages connectivity between two isolated VLANs. The routers then span the regions through WireGuard tunnels established over the public internet.
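To make the tunnel side more concrete, here is a minimal sketch that renders a wg-quick style configuration for one of the two routers. The function name, the tunnel address, the peer key, the endpoint, and the remote subnets are all hypothetical placeholders; only the port (51820) comes from this article. It also assumes the VLAN subnets in each region do not overlap, since the routers rely on distinct prefixes to decide what goes through the tunnel.

```shell
#!/bin/sh
# render_wg_conf LOCAL_TUNNEL_ADDR PEER_PUBLIC_KEY PEER_ENDPOINT REMOTE_SUBNETS
# Prints a wg-quick configuration for one router; all arguments are illustrative.
render_wg_conf() {
    cat <<EOF
[Interface]
Address = $1
ListenPort = 51820
# Placeholder: replace with the output of 'wg genkey' and keep this file mode 0600.
PrivateKey = <local-private-key>

[Peer]
PublicKey = $2
Endpoint = $3
# Remote VLAN subnets reachable through this peer; wg-quick adds routes for them.
AllowedIPs = $4
# Keep firewall and NAT state alive between the routers.
PersistentKeepalive = 25
EOF
}
```

For example, `render_wg_conf 192.168.255.1/30 "<peer-public-key>" 203.0.113.10:51820 10.1.0.0/24,10.1.1.0/24` prints a configuration that could be saved as `/etc/wireguard/wg0.conf` on the first router. The second router would use the mirror image: its own tunnel address, the first router's public key and endpoint, and the first region's subnets in `AllowedIPs`.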

Configuring NAT Exit Points

Traffic can now flow between any VLAN regardless of region. In addition, the router instances can be used as Network Address Translation (NAT) exit points, providing internet connectivity for instances on their local VLANs that are deployed without their own public internet connectivity. In this configuration, the local router instance would be designated as the default gateway (for example, 10.0.0.1 on a 10.0.0.0/24 network). We can also use the router instances as Secure Shell (SSH) management bastions.
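As a small illustration of both roles, the sketch below points a VLAN-only instance at the router as its default gateway and reaches a private instance through the router with SSH's jump-host option. The function names, user names, and addresses are hypothetical; commands are printed rather than executed unless `APPLY=1` is set.

```shell
#!/bin/sh
# Dry-run helper: print each command unless APPLY=1 is set.
run() {
    if [ "${APPLY:-0}" = "1" ]; then
        "$@"
    else
        printf '%s\n' "$*"
    fi
}

# use_router_as_gateway ROUTER_VLAN_ADDR
# Run on a VLAN-only instance: send all non-local traffic to the router.
use_router_as_gateway() {
    run ip route replace default via "$1"
}

# ssh_via_bastion USER@ROUTER_PUBLIC_ADDR USER@PRIVATE_ADDR
# Run on a workstation: use the router as an SSH jump host (ProxyJump).
ssh_via_bastion() {
    run ssh -J "$1" "$2"
}
```

For instance, `use_router_as_gateway 10.0.0.1` prints `ip route replace default via 10.0.0.1`. A route added this way does not survive a reboot, so a real deployment would also set the gateway in the instance's persistent network configuration.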

A common way to implement this kind of NAT configuration is to mark WireGuard traffic and apply IP masquerading to any traffic that leaves the public interface without this mark.

For example, the router would be configured to use an iptables rule:

iptables -t nat -A POSTROUTING -o eth0 -m mark ! --mark 42 -j MASQUERADE

We can then configure WireGuard to set a firewall mark of 42 on its own packets (the FwMark setting in a wg-quick configuration, or FirewallMark in systemd-networkd). This ensures that the encrypted WireGuard traffic is not NATed, while VLAN traffic bound for the internet is.
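Putting the mark and the masquerade rule together, a minimal sketch might look like the following. It assumes a WireGuard interface named `wg0` and a public interface named `eth0`, and it sets the mark at runtime with `wg set`, which has the same effect as `FwMark = 42` in the interface's configuration file. As before, commands are printed unless `APPLY=1` is set.

```shell
#!/bin/sh
# Dry-run helper: print each command unless APPLY=1 is set (applying needs root).
run() {
    if [ "${APPLY:-0}" = "1" ]; then
        "$@"
    else
        printf '%s\n' "$*"
    fi
}

# mark_wireguard_and_nat WG_IF PUBLIC_IF MARK
mark_wireguard_and_nat() {
    wg_if=$1
    public_if=$2
    mark=$3
    # Tag WireGuard's own encrypted UDP packets with the firewall mark.
    run wg set "$wg_if" fwmark "$mark"
    # Masquerade everything leaving the public interface except marked packets.
    run iptables -t nat -A POSTROUTING -o "$public_if" -m mark ! --mark "$mark" -j MASQUERADE
}
```

Running `mark_wireguard_and_nat wg0 eth0 42` prints both commands, and `wg show wg0 fwmark` can be used afterwards to check the value that is actually in effect.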

The cloud firewall rules would then be configured to allow WireGuard communication between the routing nodes (typically udp/51820).

We then can configure the router instances with firewall rules to control or record traffic flow across the local and global segments as necessary.

Considerations

The deployment in this example allows sharing of data globally across multiple regions and empowers the router instances to control the traffic flow between various VLAN segments. When funneling traffic from multiple VLAN segments into a single aggregation point, it is critical to understand the performance and bandwidth implications. Throughput will be bounded by the upload bandwidth limits imposed by the compute resources allotted to the router.

It’s also critical to carefully consider the VPN protocol to ensure it meets the requirements of your deployment. The technology you select will have a major impact on point-to-point bandwidth and the security of traffic sent over the public internet. WireGuard, for example, encrypts and authenticates traffic so that intercepted packets cannot be read or altered, and it has a smaller trusted computing base than an IPsec implementation like strongSwan, which limits its exposure to exploits.

Multicloud

The same kind of technology we use to span multiple regions could be implemented across multiple cloud providers. For example, you can place a router instance within another cloud service provider’s network boundary and tie it into its local, provider-specific VPC configuration. You can then use a WireGuard tunnel between the router instances to connect into that provider’s network. This implementation works well for services designed to remain exclusively within a private network.

What’s Next

Ultimately, there are a lot of different tools to work with when designing our private network, and the benefits can significantly outweigh the added complexity. If your application is growing along with your user base, designing your environment to reduce latency for more of those users can have a major impact on user experience. The additional fault tolerance will increase reliability and keep your software available and accessible.
